Two Years of Schema — The Evolution of the kDS PostgreSQL Database Design

by DL Keeshin

May 6, 2026

kDS Schema Data Flow — click circle to replay

Two years ago, the kDS Data Source Discovery (DSD) App existed as an idea and a handful of tables. Today it is a multi-schema PostgreSQL database with eight namespaces, dozens of tables, stored procedures, views, and a deployment pipeline that provisions a production-ready instance in minutes. That journey — from a single-schema prototype to a purposeful, layered relational architecture — tracks almost perfectly with the evolution of the app itself. The database did not grow randomly; it grew in direct response to the data flow the app was designed to map and manage.

This post traces that evolution across four broad phases, connects each structural shift to the blog posts that documented it, and explains the design philosophy that runs through all of it: the schema reflects the app's data flow.

Concept (Spring 2024)

The first version of the database had one objective: prove the concept. A single PostgreSQL schema held tables for organizations, roles, questions, and raw interview answers. Keys were natural — organization names, role titles, and industry descriptions served as identifiers because at that stage the goal was to get data in and get GPT-4 responses out. Referential integrity was secondary to velocity.

The first version of the database established the two foundational design commitments that have held through every subsequent

version. First, PostgreSQL was the right platform — not a document store, not a NoSQL layer, but a

fully relational ACID-compliant database whose strengths (integrity, mature tooling, powerful procedural

language) were exactly what a systematic discovery workflow required. Second, the interview response itself

would be stored as jsonb, preserving the multi-modal, free-form nature

of SME answers while keeping them inside a relational structure that could be queried, indexed, and

cross-referenced.

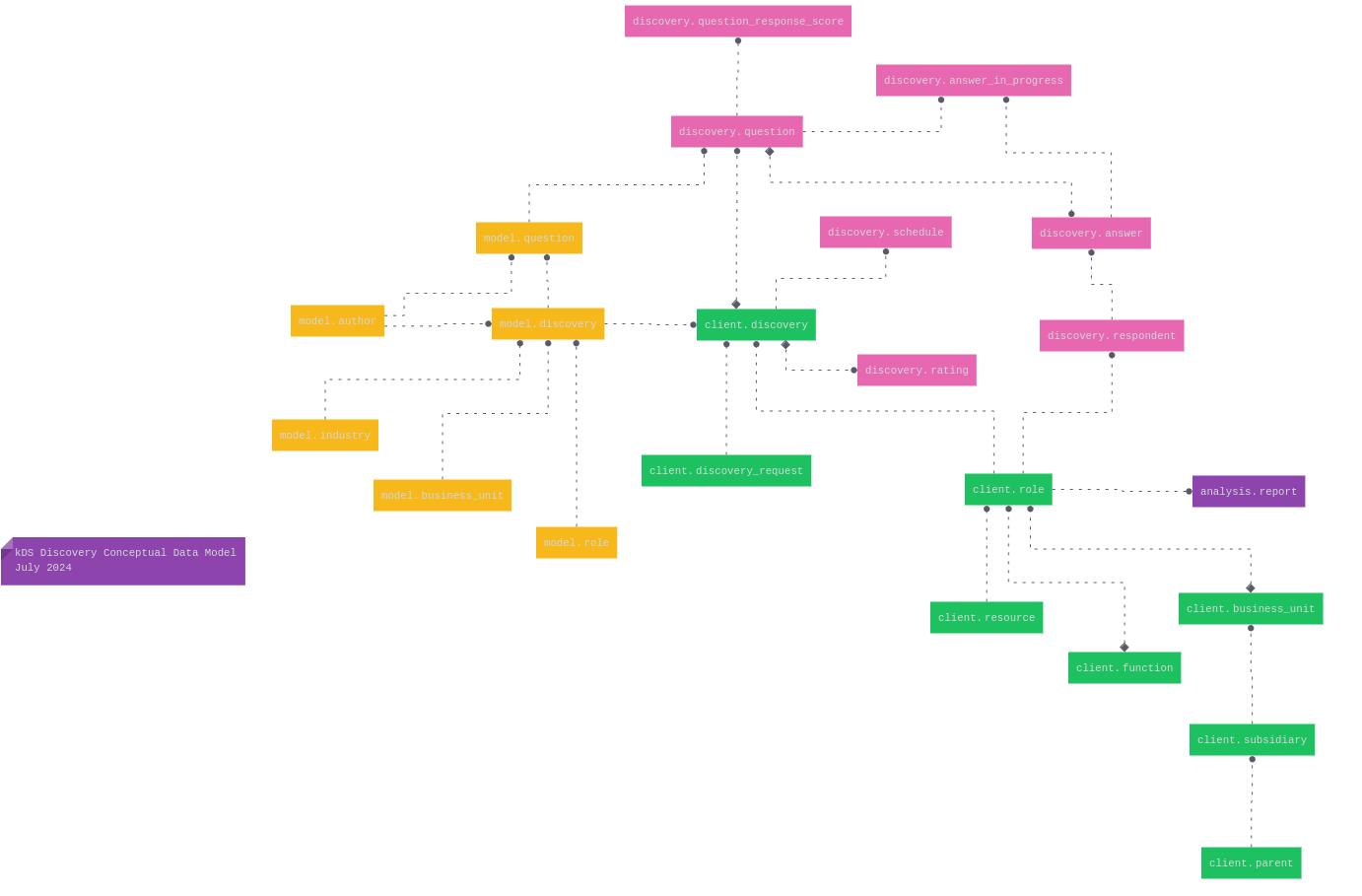

The diagram below shows the database as of July 2024 — relational structure in place, multi-schema separation just beginning.

Figure 1: kDS Discovery database schema — July 2024. The early multi-schema structure beginning to take shape.

Schema Separation and the Emergence of the Stage Pattern (Late 2024)

As the app matured, it became clear that raw intake data — contact forms, organization names,

role descriptions — needed its own home before being formalized for the interview engine.

That home became the stage schema.

The

stage schema is the intake layer. Its tables hold data exactly

as it arrives from the outside world — before the interview generation process begins. Accordingly,

its primary keys are natural keys: human-readable composite identifiers built from the

real-world attributes that make a record unique.For example,

stage.contact uses

PRIMARY KEY ("email_", "parent_", "subsidiary_", "business_unit") —

the combination of a person's email address within their organizational context.

Similarly, stage.business_unit is keyed on

("name_", "parent_", "subsidiary_"), and

stage.model on

("industry_hash_id", "business_function", "role_").

stage.parent uses the organization name itself as its primary key.

These natural keys make the staging layer legible to humans and to the app's intake logic —

no UUIDs to manage before the data has been validated.Crucially, the

stage schema carries no foreign key

constraints and no secondary indexes. The relationships between staging tables are

implied — enforced by application logic and naming convention rather than by the

database engine. Foreign keys and indexes are a property of permanence; they get assigned only when

records are promoted to their surrogate-keyed counterparts in client,

model, and interview. Deferring

that overhead to the promotion step keeps the intake layer lightweight and fast.Stage data is also temporary. Once interview generation begins and records are promoted to their permanent schemas, the corresponding staging rows are either removed or archived. The

stage schema is not a source of record — it is a loading dock

that clears after each delivery.

The separation also prompted the first formal schema taxonomy. Five namespaces began to take shape, each corresponding to a distinct zone of the app's data flow:

| Schema | Role in the Data Flow |

|---|---|

| stage | Intake and validation. Natural-keyed tables that receive raw contact and organizational data before interview generation. |

| client | The formalized organizational hierarchy: parent → subsidiary → business unit → role. All surrogate UUID keys. |

| model | The discovery interview templates: authored question sets keyed by industry, business function, and role. |

| interview | The live interview: generated questions, respondent answers, summaries, and the AI analysis pipeline outputs. |

| admin | Platform administration: accounts, access control, billing, projects, and AI prompt configuration. |

The blog series documented this transition directly. Early posts in the Designing a Data Source Discovery App series explained why namespace isolation was not just organizational tidiness but a functional requirement: schemas let different parts of the application operate on data at different stages of its lifecycle without stepping on each other.

The Key Transition: Natural to Surrogate (Early 2025)

The most conceptually significant design decision in the kDS database is the moment a record graduates

from the stage schema to the client

schema. That transition is not just a data copy — it is a key promotion.

When an admin initiates interview generation for a contact, the app reads the natural-keyed staging record — for example, a

stage.contact row identified by

(email_, parent_, subsidiary_, business_unit) — and promotes

it into the client schema, where it receives a UUID surrogate key

generated by gen_random_uuid().The same promotion applies at every level of the organizational hierarchy:

stage.parent(keyed by name) →client.parent(keyed byparent_id uuid)stage.subsidiary(keyed by name + parent name) →client.subsidiary(keyed bysubsidiary_id uuid)stage.business_unit(keyed by name + parent + subsidiary) →client.business_unit(keyed byunit_id uuid)stage.contact(keyed by email + org context) →interview.respondent(keyed byrespondent_id uuid)

stage schema carries neither — its

inter-table relationships are implied, and its records are too short-lived to justify index overhead.

Once a record lands in client or interview,

the database engine takes over enforcement: foreign keys link

client.role to client.business_unit,

interview.respondent to client.role,

interview.question to client.interview,

and so on through the full relational graph. BTREE indexes on the most-queried foreign key columns

are created at the same time.Once the surrogate key is assigned, every downstream table —

interview.question,

interview.answer, interview.summary,

analysis.by_role — joins on UUID, not on string identity.

The staging natural keys are retired with the staging rows themselves; the surrogate keys govern

everything that comes after.

This two-phase key design reflects a broader insight: the discovery app is itself a pipeline, and the

database schema should make that pipeline legible. The stage schema is

the intake dock; the client and interview

schemas are the production floor. The key transition is the handoff between the two.

Early 2025 also brought the formalization of the insert-only approach, documented in

the blog post Part 15: A Primarily Data Insert Only Approach. Rather than updating records in place,

the app inserts new rows wherever possible. The one major exception — the client.interview

table, whose start and end dates cannot be known at generation time — is handled by a PostgreSQL

trigger that mirrors every update to client.interview_history, preserving

the full audit trail.

The Full Eight-Schema Architecture (2025–2026)

By mid-2025, the database had reached its mature form: eight schemas, each representing a distinct functional zone in the app's data flow. Three additional namespaces joined the original five.

| Schema | Added | Purpose |

|---|---|---|

| analysis | 2025 | Cross-interview AI synthesis: by_role and by_business_unit tables aggregate summarized interview results across respondents, surfacing patterns and dependencies at the organizational level. |

| reference | 2025 | Stable reference data: industry codes (NAICS-derived, stored as hash IDs for efficient joins) and geographic locations. These tables are populated at deployment time and shared across stage, client, and model. |

| temp | 2025 | Transient working storage for in-progress operations: summary control state, batch billing staging, and admin account provisioning before promotion to admin. |

The analysis schema represents the database's most direct expression

of the app's end-to-end purpose. After raw answers flow through interview.answer

and are summarized into interview.summary and

interview.summary_by_topic, the AI pipeline's output is lifted one

level higher: analysis.by_role consolidates summaries across all

respondents who share a role, and analysis.by_business_unit does the same

at the organizational unit level. The consolidated_summary column in

both tables stores the GPT-4-synthesized result as jsonb, alongside

UUID array columns (source_summary_id_set,

interview_id_set) that trace every result back to its source interviews —

a full provenance chain from raw answer to strategic insight.

The Model Schema: Template Library for the Interview Engine

The model schema deserves particular attention because it represents

the authored, reusable template layer that the interview engine draws from. A

model.discovery record defines a discovery interview template:

industry, business function, role, author, and project scope. Its child table,

model.question, holds the canonical question set for that template,

with syntax_ stored as jsonb to

accommodate rich question structures.

When an interview is generated for a specific respondent, the model questions are instantiated as

interview.question records, each carrying a foreign key back to its

model.question origin via model_question_id.

This separation of template from instance is the database's way of ensuring that a single, peer-reviewed

question set can serve many respondents across many engagements without duplication.

The stage.model and stage.model_question

tables mirror this pattern in the staging layer. A new discovery model starts life in staging —

keyed naturally by (industry_hash_id, business_function, role_) —

and is promoted to model.discovery (UUID-keyed) once it has been

reviewed and approved, following the same natural-to-surrogate key promotion that governs all other

staging-to-production transitions.

The Prompt Architecture: Database-Driven AI Configuration

One of the more forward-looking additions to the admin schema is

admin.prompt_setting. As documented in the blog post

Part 17: Migrating Prompts to Jinja2 , the app moved away from hard-coded LLM prompts to

database-stored Jinja2 templates. Each row in admin.prompt_setting

holds a named, versioned prompt template that the Flask application retrieves at runtime. This means

that prompt tuning — adjusting how GPT-4 is asked to analyze interview responses, generate questions,

or produce executive summaries — requires no code deployment, only a database update.

The prompt is the configuration; the database is the configuration store.

The Email Workflow: Stage as Operational Ledger

In version 2.26, the stage schema took on a second operational role

beyond intake. The email invitation workflow — generating secure token-authenticated interview

links and tracking their delivery — is managed through

stage.interview_invite,

stage.email_job,

stage.email_send_failed, and

stage.contact_action.

Once an invitation is delivered and the respondent's role is confirmed, the formal invite record

is promoted to interview.invite, maintaining the natural-to-surrogate

key handoff that characterizes the broader architecture. The

stage.access table similarly handles token-based authentication

for role confirmation emails, with tokens keyed by uuid and

expiring on a configurable schedule.

The Complete Data Flow, Schema by Schema

Stepping back, the eight-schema architecture maps directly onto the app's workflow. Data enters in human form, is validated and staged, is promoted into formalized relational structures when the interview process begins, flows through the AI pipeline, and is synthesized into cross-organizational analysis. Each schema is a station on that pipeline.

Contact forms, organizational registrations, model requests. Natural composite primary keys. AI-assisted field enrichment (RAG columns for industry classification, role assignment). The entry point for all external data.

The formalized four-level hierarchy: parent → subsidiary →

business unit → role. All entities carry UUID surrogate keys assigned at promotion time.

client.interview links roles to their active discovery sessions.

Authored and approved discovery interview templates, organized

by industry, business function, and role. The canonical question set from which all

interview instances are derived. Promoted from stage.model.

Generated questions, respondent answers, in-progress answer tracking, follow-up question cycles, topic-level summaries, data flow mappings, solutions-in-use, and executive summaries. The heart of the app's AI pipeline.

Role-based and business-unit-based consolidation of interview summaries. GPT-4 synthesizes patterns across multiple respondents. Full provenance maintained via source summary and interview ID arrays.

Accounts, access tokens, billing, subscription state (Stripe), discovery plan definitions, project management, author credentials, and — critically — the database-driven prompt library that configures the AI engine at runtime.

Industry classifications (NAICS-derived, stored as 32-character hash IDs) and geographic locations. Populated at deployment time, shared across stage, client, and model schemas as foreign-key anchors.

In-progress summary control state, batch billing records, and admin account provisioning buffers. Data here is ephemeral by design — it exists only until promoted or cleared by the relevant workflow step.

The Schema Today — May 2026

The diagram below shows the complete database as of this writing. Compared to the July 2024 ERD above,

the growth is visible at a glance: eight schemas, a fully articulated organizational hierarchy in

client, the multi-stage AI pipeline captured across

interview and analysis, and a

stage schema that is clearly differentiated from everything downstream

of it — no foreign key lines crossing into it, because none exist in the database engine.

The relationships between staging tables and the rest of the system live in application logic, waiting

to be enforced by the database only after promotion.

Figure 2: kDS Discovery database schema — May 2026. Eight schemas, full relational graph in client and interview, and a constraint-free stage intake layer on the left.

What Two Years Produced

The kDS database today is not a general-purpose schema — it is a schema that knows what the app

does. Every structural decision reflects a step in the discovery workflow: the natural keys in

stage reflect the human intake process; the UUID promotion reflects

the moment the AI engine takes over; the jsonb columns throughout

interview reflect the richness of SME conversation; the provenance

arrays in analysis reflect the requirement that every insight be

traceable to its source interviews.

The toolchain that supports it has matured in parallel. dbdiagram.io serves as the visual modeling layer, exporting clean DDL that seeds the structural baseline of every deployment. dbForge Schema Compare generates the delta scripts that add stored procedures, views, and functions maintained in the development environment. A single bash deployment script applies both in sequence, producing a fully provisioned instance from a clean PostgreSQL install. LLMs, including Claude and ChatGPT, have proven to be productive collaborators on DBML and DDL work, since they understand both the modeling language and the design intent behind it.

The two-year arc from prototype to production reflects a principle that has guided kDS from the start: the database is not just storage for the app — it is the app's data flow, expressed in relational form. If you want to understand what the kDS DSD App does, read the schema.

Is All of This Necessary?

Yes — unequivocally. AI systems produce dramatically more reliable results when operating on

structured data, and every GPT-4 prompt the kDS DSD App sends is assembled from clean, typed, normalized

relational records: role descriptions, industry classifications, prompt templates, topic-level summaries.

When inputs are well-structured, outputs are proportionally more coherent and more defensible —

a language model cannot flag ambiguous or malformed context, it simply produces confident-sounding output

regardless. The multi-schema architecture also makes the analysis layer trustworthy and traceable: because

every source record passed through the same structured pipeline, findings in

analysis.by_role can always be walked back to the specific interviews

that produced them. That kind of provenance is not possible with unstructured documents or ad-hoc

spreadsheets. This is, in short, pre-agentic infrastructure — the structured foundation that AI

agents will require to act reliably on enterprise data, not just answer questions.

Interested in what this architecture can do for your organization's undocumented data landscape? Reach out at talk2us@keeshinds.com or visit keeshinds.com. We are actively onboarding select beta partners.